When Storytelling Isn’t Enough

I’ve been thinking about the difference between storytelling and story-showing. When we tell a story, we hand the images to the listener’s imagination. That can be powerful. But sometimes people can’t visualize what’s being told, and the story loses its impact. And when you’re presenting to a group, each person forms a different image in their head. That divergence might be exactly what you’re after. But if the goal is to get everyone in the room sharing the same vision, storytelling alone may not be enough.

That’s the idea I’ve been developing: instead of only describing what a user story or human story means, I want to show it visually, in a way that lands the same way for everyone in the room.

The Gap Between Intention and Execution

Over the years I’ve read several books on visual storytelling and applied their ideas with some success. People have told me directly that my drawings helped drive points home. One of those books, See What I Mean, paired with User Story Mapping, made me want to draw comic strips as a way of showing the stories I was telling. But even quick drawings take more time than I can afford.

I’ve been wanting to use AI tools to close that gap. My first attempt was a couple of months ago, after watching a video in which someone showed a Gemini Gem that creates a storyboard from a story, fed that storyboard into NotebookLM, and used its slide deck creation feature to produce comic book pages. I experimented with that. The results were okay, but not quite what I was aiming for.

What I Actually Want

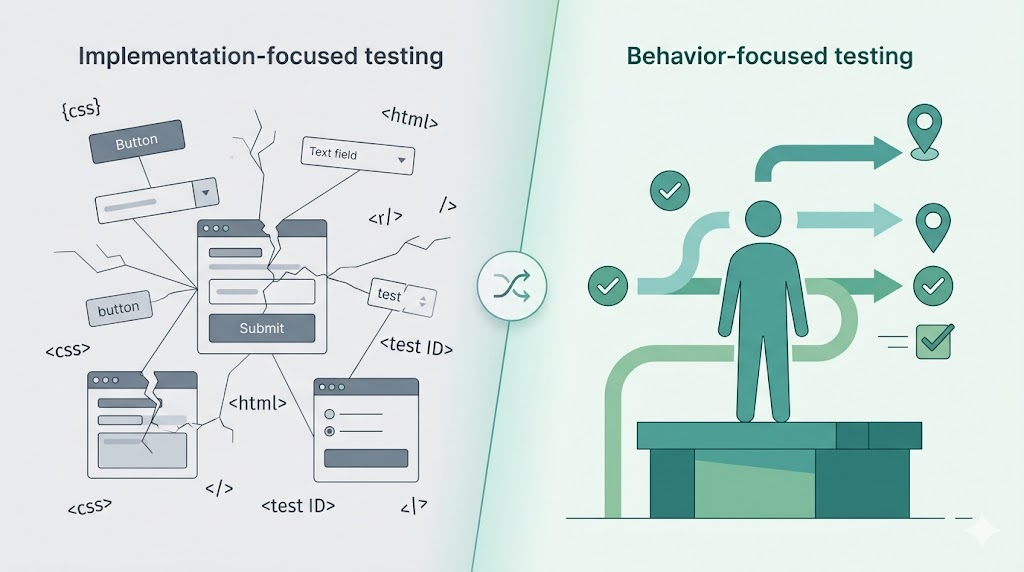

My goal is to take human stories written in what I think of as “cinematic style” (the “In Order To / As a / I Want To” format, with acceptance criteria and Given/When/Then scenarios), combine them with personas (their traits, challenges, and context), and generate comic book–style illustrations. Not screenshots of user interfaces. Not wireframes. I want to step back and show the human experience: what life looks like before and after the feature exists.
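To make those inputs concrete, here is a minimal Python sketch of how a story and a persona might be held as data and merged into one text brief for an illustration step. The class and field names (`HumanStory`, `Persona`, `Scenario`, `brief`) are hypothetical, chosen only for illustration. They are not the format any of the tools mentioned here actually use.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Scenario:
    """One Given/When/Then scenario attached to a story."""
    given: str
    when: str
    then: str


@dataclass
class Persona:
    """Who the story is about: traits, challenges, and context."""
    name: str
    traits: list[str]
    challenges: list[str]
    context: str


@dataclass
class HumanStory:
    """A story in the 'In Order To / As a / I Want To' format."""
    in_order_to: str
    as_a: str
    i_want_to: str
    acceptance_criteria: list[str] = field(default_factory=list)
    scenarios: list[Scenario] = field(default_factory=list)

    def brief(self, persona: Persona) -> str:
        """Combine the story and a persona into one plain-text brief."""
        lines = [
            f"In order to {self.in_order_to}, as a {self.as_a}, "
            f"I want to {self.i_want_to}.",
            f"Persona: {persona.name} ({', '.join(persona.traits)}). "
            f"Context: {persona.context}",
            "Challenges: " + "; ".join(persona.challenges),
        ]
        lines += [f"Acceptance: {c}" for c in self.acceptance_criteria]
        lines += [
            f"Given {s.given}, when {s.when}, then {s.then}."
            for s in self.scenarios
        ]
        return "\n".join(lines)
```

Keeping the persona separate from the story means the same persona can be reused across every story in an epic.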

Building the Pipeline

A couple of days ago, I decided to try this with Claude Cowork. I explained what I was after: I would give it epics (collections of stories), and it would suggest how to illustrate them. Not every detail, just the moments that drive the main points home. I mentioned that I have a Gemini AI Plus account, so we could use Gemini’s image generation model as an option.

From there, Cowork worked through a plan. First, I prompted Gemini separately, asking it to describe the image model’s capabilities and what another AI tool generating storyboards would need to know to write good prompts for it. I took that output and gave it to Cowork, and it built out the full pipeline.

The workflow goes like this: Cowork takes an epic with multiple user stories and produces a storyboard file. That file includes a character reference sheet (to keep characters visually consistent across panels), scene descriptions for each panel, and image generation prompts. It then pauses so I can review the markdown, make revisions, and adjust anything before the images are generated.
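As a rough illustration of what such a storyboard file could contain, the sketch below renders a character reference sheet, scene descriptions, and per-panel prompts into markdown. The layout, the function names (`panel_prompt`, `render_storyboard`), and the default style string are all assumptions. The file Cowork actually produces may look quite different.

```python
from dataclasses import dataclass


@dataclass
class Character:
    name: str
    appearance: str  # fixed visual description, restated in every prompt


@dataclass
class Panel:
    scene: str        # what happens in this panel
    characters: list  # names of the characters who appear in it


def panel_prompt(panel, sheet, style="comic book illustration"):
    """Build one image prompt, restating each character's look for consistency."""
    looks = "; ".join(
        f"{c.name}: {c.appearance}" for c in sheet if c.name in panel.characters
    )
    return f"{style}. {panel.scene} Characters: {looks}"


def render_storyboard(title, sheet, panels):
    """Render reviewable markdown: the character sheet, then one section per panel."""
    out = [f"# {title}", "", "## Character reference sheet", ""]
    out += [f"- **{c.name}**: {c.appearance}" for c in sheet]
    for i, p in enumerate(panels, start=1):
        out += [
            "",
            f"## Panel {i}",
            "",
            f"Scene: {p.scene}",
            "",
            f"Prompt: {panel_prompt(p, sheet)}",
        ]
    return "\n".join(out) + "\n"
```

Restating each character's appearance inside every prompt is one simple way to keep characters consistent when panels are generated one at a time. Because the result is plain markdown, it is easy to review and edit by hand before any image is generated.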

I take that markdown to a Gemini Gem I built, a mini-application I configured with instructions for what to do with the file. It reads the character sheets, scenes, and prompts, and generates the panels one by one.

The output is an HTML file with the comic book pages, including some nice interactive effects: panels that pulse or animate on hover. I then download the HTML and the individual image files from Gemini.

I tried automating the download step, but I haven’t finished that yet. I’m keeping focus on getting the rest of the pipeline solid first.

From Images to Slide Deck

Once the HTML and images are saved, I go back to Cowork and tell it they’re ready. It then takes those image files and builds a PowerPoint slide deck with four slides:

-

The title of the story

-

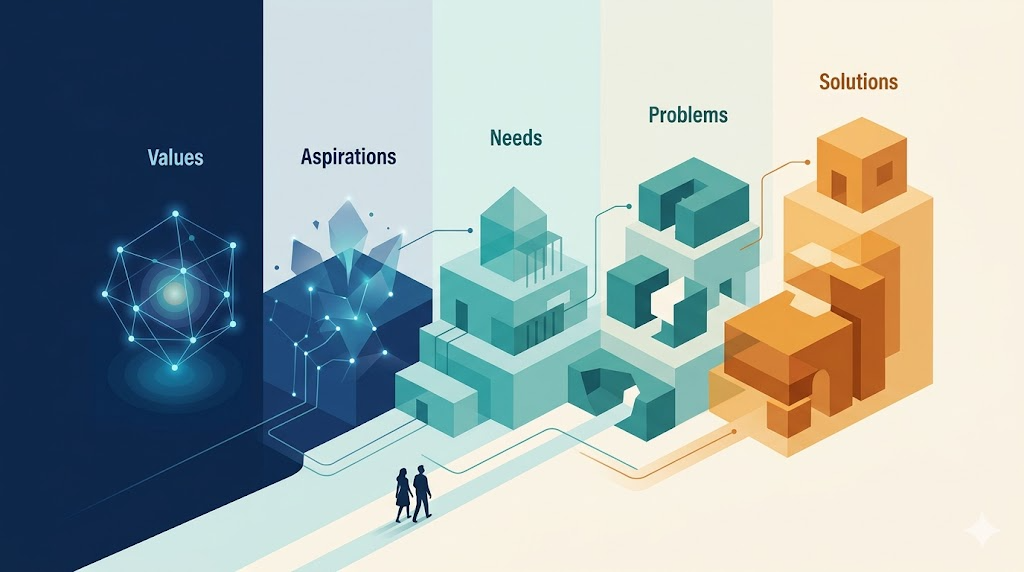

The “before”: the problems and pain points that exist without the feature

-

The “after”: the value and benefits the personas experience once the feature is implemented

-

A summary slide with the key takeaway
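The four-slide structure can be sketched as plain data before any presentation file is written. This is a minimal sketch under stated assumptions: the function name `deck_outline`, the dictionary keys, and the slide headings are hypothetical, and how Cowork actually assembles the deck is not described here.

```python
from pathlib import Path


def deck_outline(title, before_images, after_images, takeaway):
    """Return the four slides in order: title, before, after, summary."""
    return [
        {"kind": "title", "heading": title, "images": []},
        {
            "kind": "before",
            "heading": "Before",
            # Keep only file names so the outline does not depend on folder layout.
            "images": [Path(p).name for p in before_images],
        },
        {
            "kind": "after",
            "heading": "After",
            "images": [Path(p).name for p in after_images],
        },
        {"kind": "summary", "heading": "Key takeaway", "body": takeaway, "images": []},
    ]
```

An outline like this could then be handed to whatever builds the actual slides. Keeping it as data makes it easy to check that each downloaded panel ended up on the intended slide.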

After the first epic was done and refined, I asked Cowork to create a skill from that workflow so I could reuse it consistently. It did. Since then, I’ve used it to illustrate several more epics, and I’m liking what I’m seeing.

The Full Flow

The end-to-end flow now looks like this: a problem statement from either a voice recording I transcribed or a stakeholder meeting transcript → my existing workflows to produce user stories and personas → Cowork to generate the storyboard → the Gemini Gem to generate the comic book panels as an HTML page → optional manual refinement of individual panels → back to Cowork to produce the PowerPoint deck.

That deck can go directly into a presentation or get emailed to someone. And it lets me do what I’ve been aiming for all along: show and tell. Not just describe the problem, but show it. Not just argue for the value of a solution, but show that too.

Story-showing alongside storytelling.
