We started this week’s conversation thinking about tracking work. Matthew has been building Mardi Gras, an open-source tool for tracking what agents are working on. I’ve been approaching the same problem from a different angle: tracking what I’m working on, with AI as part of my process.

That difference matters more than it might seem at first.

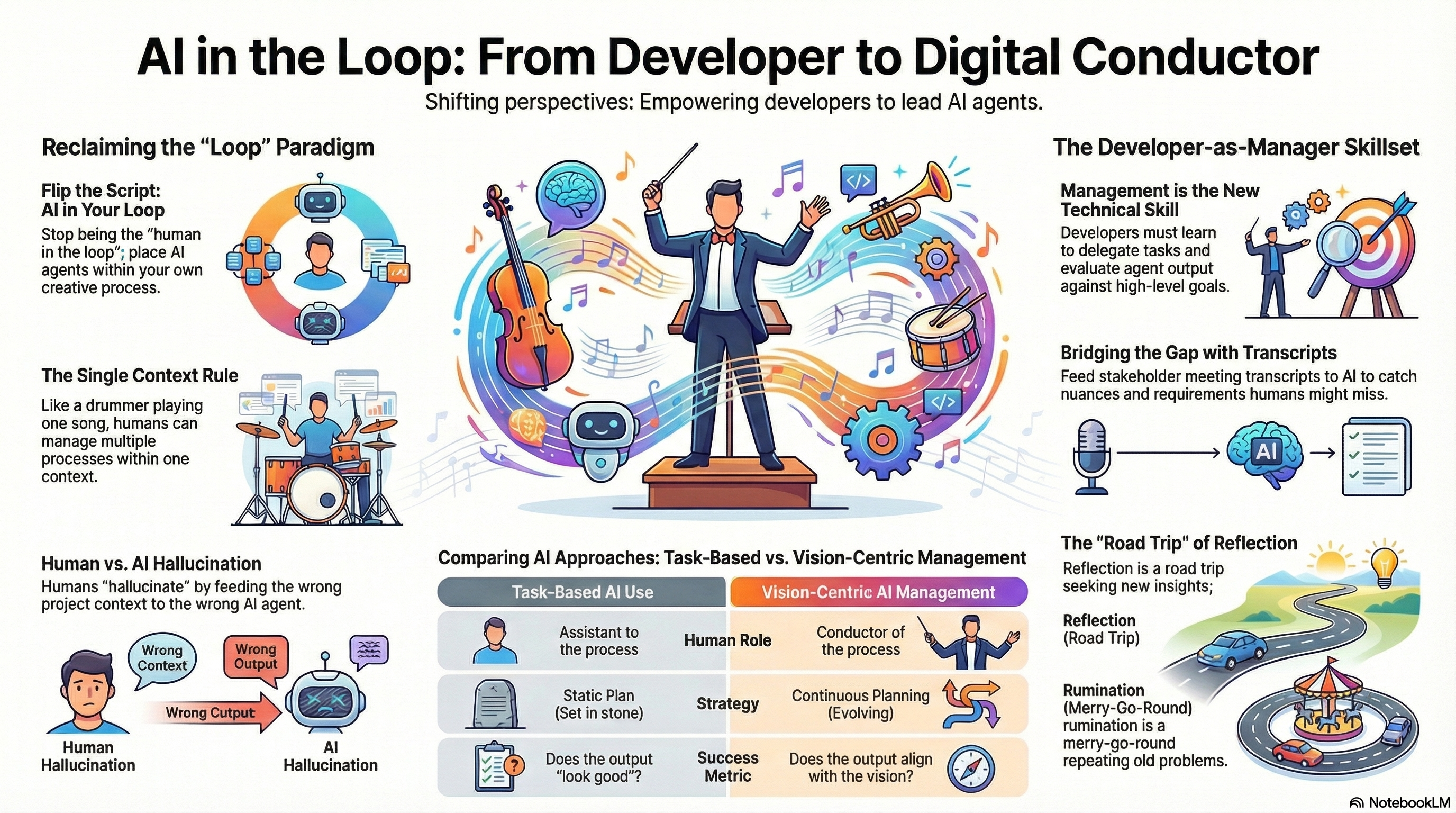

The phrase “human in the loop” keeps coming up in conversations about AI-assisted development. But something about that framing bothers me. I’m not the human in the AI’s loop. The AI is in my loop. It’s my process, my work, my context. The agents are there to help me, not the other way around.

Playing Multiple Songs at Once

We talked about what happens when you try to manage multiple work streams simultaneously. I used the analogy of playing drums: you can work the kick drum, the snare, the cymbals, even sing at the same time. But all of that is within the context of one song.

Try to play two different songs at the same time? That’s a different story.

Nobody can do that. Even if you could technically pull it off with polyrhythms, it would be disorienting for the listener. And exhausting for the person trying to execute it.

That’s how it feels when I tell agents to work on two different work streams. Each work stream has its own context. The agents aren’t confused because they’re only working on their own things. But I’m trying to manage both contexts simultaneously, and that’s where the problem lies.

When Humans Hallucinate

Matthew pointed out something I have experienced: humans can hallucinate, too, especially when managing multiple contexts.

You’re working on two separate tasks with distinct contexts. You need to give an agent a prompt. It’s very easy to paste the wrong thing into the wrong conversation. Maybe you take a screenshot and post it to the wrong chat. The agent tries to make sense of it: “This looks like it’s about Project X, but we’re not in that project.”

If the agent catches it, that’s good. But what if you’ve introduced something the agent can make sense of in the wrong context? It goes off and does work based on incorrect information. You only realize later that you pasted the wrong thing in.

If you’re running long tasks where you give a prompt and step away, you’re basically on the wrong flight, and you don’t know it until you get to the next airport.

The Management Gap

As we explored this further, Matthew made a connection I hadn’t seen: we’re effectively asking developers to do managerial work, and many of us don’t have that experience.

Managing agents means delegating tasks to disparate beings. As a manager, you don’t need context for each individual task they’re working on. That’s their responsibility. You just need the higher-level context of the project itself.

That’s a big gap in our work. There’s an assumption that we’ll be able to manage all these things and have this higher-level understanding. Maybe we do have that understanding, but there may be a breakdown at the management level.

We’re not just writing code anymore. We’re evaluating what agents produce, deciding what the next step is, and holding their output up against the vision to check for alignment.

Vision and Execution

This led us to talk about two distinct skills: managerial execution skills and envisioning skills.

You may be able to manage everything, provided you have a blueprint that tells you the big picture. As solution developers, we need to hone our ability to take someone else’s vision, interpret it, and manage the agents who will execute it.

We have to see how the pieces connect. We have to know how they align with the vision.

If we’re not doing that work, if we’re just giving the output the eyeball test, clicking around to see if it works and looks good, we’re missing something critical. AI is getting better at creating things that look good. There might even be bonus features you didn’t ask for.

But if the output, however pretty it is, doesn’t align with the vision, we have to reject it. We don’t think we’re being diligent about this.

Catching What You Missed

I’ve noticed something interesting in my work. I facilitate conversations with stakeholders, ask questions that unveil needs, and give that to AI to create user stories, UX design, and UI design. Then I tell it to build.

It comes back with something that looks great. But it’s not just that it builds things I never told it to. It captures nuances from the stakeholders’ transcript that I missed when I first heard it.

Matthew pointed out that the AI is reading the conversation differently than we are. It’s catching everything. Its decisions about design and implementation are shaped by what was said in the conversation. But there must be a way to see why those decisions were made, to connect the surprises back to reality.

Designing the Sprint Review

What I’ve been doing is prompting the models before the sprint review: “Remember that story we worked on? Help me demonstrate that to the stakeholder. Give me a step-by-step of what I should show them and where I should stop for feedback.”

The AI catches things the stakeholders weren’t sure about. It builds me a script that says, “Good thing you asked.” I need to design the sprint review to make space for that question. Show it, have the stakeholders react, capture that feedback, and feed it right back in.

We’ve always tried to do this, even before AI. We would home in on what the stakeholder wants, describe it, and possibly create wireframes. But now we can build a full proof of concept in anywhere from 30 minutes to a few hours. They can see it, and that increases their confidence. The feedback they share gets fed back in, and we have a more concrete idea of how to proceed.

It’s a less abstract vision than when we started. We started with words, a vision statement describing something in somebody’s mind. Now we have something tangible that we can all see simultaneously.

The Living Plan

We talked about how most projects follow a sprint structure. That’s our human loop. Where do we fit AI into that loop?

The plan we make in the morning might not be the plan by afternoon. Someone calls and says, “That page we’re working on? It needs to be fundamentally different.” How do we jump in and tell an agent to stop what it’s working on and do something completely different?

We have a static view of the work, where the plan is set in stone. Once it kicks off, it’s taken to completion. Only after that will we think of something different.

But it’s a matter of perspective. Are we seeing things through the lens of the plan as something we have to follow? Or are we framing it from the standpoint of a bigger vision and needs?

We’re planning how to achieve that vision at this point in time, given what we know. We execute that plan, then, as quickly as possible, compare what came out to the big vision. We know what the plan was, but if what came out is misaligned with the vision, what have we learned? What are we going to try next?

Don’t focus as hard on the plan. Focus harder on the planning. It’s ongoing. Keep acquiring new information and adjusting accordingly.

Whose Loop Is It Anyway?

Matthew made a point about the language we use. In our efforts to remove ourselves from the process, we’re losing something important.

When we think of “human in the loop,” the language comes from the idea that we’ve offloaded work to agents. It invites us, every once in a while, to give an opinion. But given where things are today, we’re the ones launching these processes. We’re the ones with the final say.

We should think of agents as something that comes into our process. The fact that we don’t is a hint about how we think about this at large.

It’s a hint that ultimately the goal is to remove ourselves from the process. We don’t even see ourselves as fundamentally the controllers of the process. We’re in something else’s loop.

When you hear “human in the loop” and feel it doesn’t sound right, but notice that language comes up in meetings all the time without pushback, that’s also a hint.

I’ve started pushing back. Don’t tell me I’m the human in the loop. The thing is in my loop.

Codifying Knowledge vs Opening Minds

Near the end, I brought up something I’d been reading in Adam Grant’s “Think Again.” There’s a passage about codifying knowledge: it might help us track it, but it doesn’t necessarily lead us to open our minds.

We’re creating all these skills and workflows for AI. We’re codifying our knowledge. Here, AI, do this. Why? Just do it because I told you so.

I’ve stopped thinking as much about creating guardrails for AI. Instead, I think about codifying my knowledge based on how I’ve made decisions in the past, so that when the AI is working out how to execute my request, it’s thinking the same way I think when making decisions.

That requires having an open mind. If I’m telling the AI how I decide and asking it to apply those principles, and I tell it to do something that goes against how I normally decide, it should ask me. “You normally decide like this under these kinds of circumstances. Now the scenario is different. I have some questions for you.”

It’s a living process. Just as we acquire new information and make changes based on it, we also have to let older information decay. If a pattern is no longer being used, something I used to do a lot but don’t anymore, that needs to be removed from the codified knowledge.
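To make that a little more concrete, here is a minimal Python sketch of what a decaying store of decision patterns might look like. Everything in it is hypothetical: the `DecisionLog` and `DecisionPattern` names, the 90-day cutoff, and the simple exact-match comparison are assumptions for illustration, not how any particular tool works.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class DecisionPattern:
    """One codified habit: in `situation`, I usually choose `choice`."""
    situation: str
    choice: str
    last_used: datetime


@dataclass
class DecisionLog:
    # Hypothetical cutoff: patterns unused for longer than this count as stale.
    max_age: timedelta = timedelta(days=90)
    patterns: dict = field(default_factory=dict)

    def record(self, situation: str, choice: str, when: datetime) -> None:
        """Store or refresh the pattern for a situation."""
        self.patterns[situation] = DecisionPattern(situation, choice, when)

    def question_for(self, situation: str, requested: str) -> Optional[str]:
        """Return a clarifying question if a request departs from the usual choice."""
        known = self.patterns.get(situation)
        if known is None or known.choice == requested:
            return None
        return (
            f"You normally choose '{known.choice}' for '{situation}', "
            f"but asked for '{requested}'. What changed?"
        )

    def prune(self, now: datetime) -> list:
        """Drop patterns not used within max_age; return the removed situations."""
        stale = [s for s, p in self.patterns.items() if now - p.last_used > self.max_age]
        for s in stale:
            del self.patterns[s]
        return stale


log = DecisionLog()
now = datetime(2025, 6, 1)
log.record("new endpoint", "write tests first", now - timedelta(days=10))
log.record("styling", "inline CSS", now - timedelta(days=200))

# A request that goes against the recorded habit produces a question, not silent compliance.
print(log.question_for("new endpoint", "skip tests"))

# The habit I haven't used in 200 days decays out of the codified knowledge.
print(log.prune(now))
```

The point of the sketch is the shape, not the details: the record of how I decide gets refreshed as I work, a request that breaks the pattern triggers a question back to me, and anything I’ve stopped doing eventually falls out.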

Reflection vs Rumination

The other passage from “Think Again” that struck me: “The difference between reflection and rumination is whether you are still learning. If you’re pondering a familiar problem without gaining fresh insights, it’s time to seek new information.”

Are you reflecting or are you ruminating?

Matthew used the analogy of motion: both involve moving, but one is like taking a road trip, and the other is like riding a merry-go-round. Reflection is the road trip. Rumination is the merry-go-round. There’s motion happening, but you’re not seeing anything new. Everything you see is something you saw on the last rotation.

There’s that definition of insanity: doing the same thing over and over and expecting a different result.

If you’re on a merry-go-round expecting to see something new, I’ve got bad news for you.

This conversation wandered through management skills, vision alignment, living plans, and the difference between reflection and rumination. But it kept circling back to one question: whose loop is this, really?

Watch the full episode to hear how we explored these ideas and where the conversation took us.

Conversations like these happen all the time at Improving. We’re hiring Senior and Principal AI-Enabled Developers. Reach out if you’re interested.
