I keep hearing “human in the loop” everywhere. I’ve said it myself many times. It’s the phrase we use when talking about AI systems: a way of making sure humans stay involved, so that we don’t automate ourselves out of the picture entirely.

But something about it bothers me.

When we say “human in the loop,” we’re framing everything in terms of AI. The AI is doing the work, running the show, and we’re just… there. A checkpoint. A validator. The thing in the middle that needs to approve the machines’ decisions.

It should be the other way around.

The AI in the Loop

What if we flipped it? What if we called it “AI in the loop” instead?

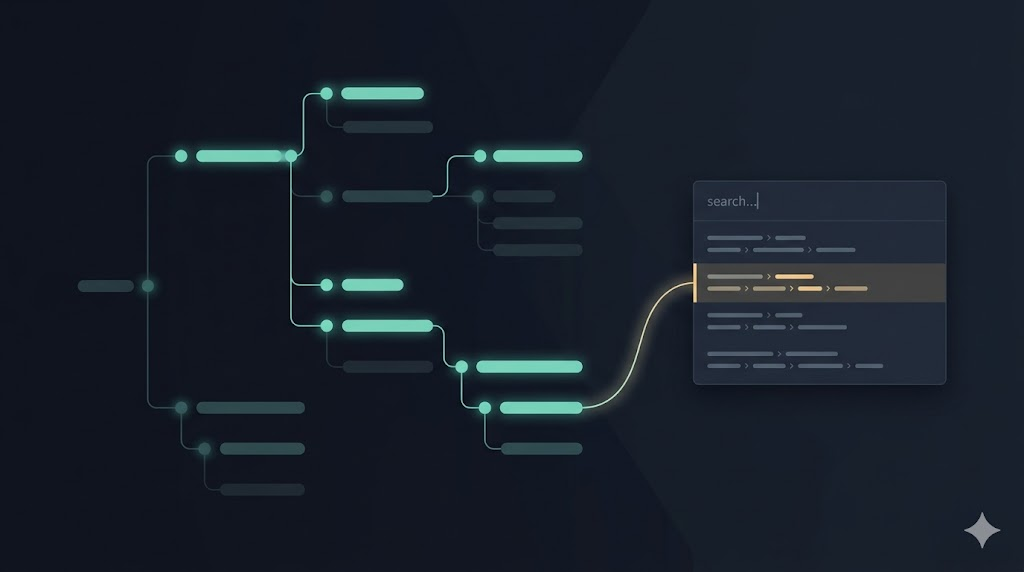

That small shift changes our perspective. Now the human owns the loop. Humans define the workflow, goals, and outcomes that matter. AI becomes a tool we bring in (strategically, intentionally) to help us move faster or handle specific tasks within our process.

This isn’t just semantics. Language shapes how we think. And how we think shapes what we build.

When I wrote about my workflow last year, going from conversations with humans to delivering solutions that satisfy their needs, I was already thinking this way. I was mapping out my loop, the one I control, and figuring out where AI could help. Not where I could fit into AI’s process.

That’s the difference. AI should serve us, not the other way around.

Why Language Matters

I’ve always been particular about language. Not in a pedantic way, but because the words we choose influence how we think and make decisions. And when decisions count, that matters.

Take date formats. In Brazil, we write day-month-year. That made sense to me growing up because the day is what changes most often. It’s the part we forget. The month takes 28 to 31 days to change. The year takes 365 or 366. So we start with what matters most in daily life.

But ask a software developer, and they’ll tell you year-month-day makes more sense because it’s easier to sort and filter. And they’re right, in that context. When I write journal entries, I use year-month-day because I’m optimizing for later retrieval. The context determines the choice.
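To make the sorting point concrete, here is a minimal Python sketch. The dates are made up for illustration, and the variable names are my own. It shows that plain text sorting puts year-month-day strings in chronological order, while day-month-year strings end up scrambled.

```python
# Hypothetical journal dates, the same three days written both ways.
dates_dmy = ["05-01-2024", "17-03-2023", "09-12-2023"]  # day-month-year
dates_ymd = ["2024-01-05", "2023-03-17", "2023-12-09"]  # year-month-day

# Plain string sorting compares characters left to right.
sorted_dmy = sorted(dates_dmy)  # ordered by day first, so not chronological
sorted_ymd = sorted(dates_ymd)  # ordered by year, then month, then day

# Filtering by year is a simple prefix check with year-month-day.
entries_2023 = [d for d in sorted_ymd if d.startswith("2023-")]

print(sorted_dmy)
print(sorted_ymd)
print(entries_2023)
```

The year-month-day list comes out in calendar order because the most significant part is on the left, which is exactly why it suits retrieval better than daily conversation.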

Or addresses. In Brazil, we say “Avenida Paulista, 1234.” In the US, it’s “1234 Westheimer.” I always found the US format odd because if someone passes out mid-sentence after saying “1234” when asked for an address, you have nothing useful to work with. But if they say “Westheimer,” you can at least find the street.

We start with what’s most valuable given the context.

Human Stories, Not User Stories

This same thinking applies to how we write user stories.

The standard format is “As a [role], I want [feature], so that [benefit].” But for a long time, I preferred flipping it: “In order to [benefit], as a [role], I need [feature].”

Start with the outcome. Start with what matters. The “so that” part is the North Star: it’s why we’re building anything at all. Leading with it keeps us focused on value, not features.

And while we’re at it, maybe we should stop calling them “user stories” altogether.

When we say “user,” we reduce people to their interactions with our product. But there’s a whole life beyond that. A person with goals, challenges, relationships, and context that extends far beyond our software.

What if we called them human stories instead?

A human story is the story that a person would tell someone else. “I was dealing with this challenge, and then I used this product, and it helped me achieve what I needed.” That’s the story we should be enabling. Not “as a user of the system,” but as a person living their life.

Principles Over Guardrails

Back to AI. We talk a lot about putting “guardrails” on AI systems. Rules to keep them from doing the wrong thing.

But guardrails are reactive. They’re boundaries we set after the fact.

What if we gave AI our principles instead?

Principles are how we make decisions. They’re the reasoning behind our choices. If AI understands how we think (what we value, what trade-offs we make, what context matters), it can help us better.

Not by following rigid rules, but by aligning with how we already work.

When AI has context and principles, it can evaluate decisions faster. It can surface options we might not have considered. It can handle the routine parts of the loop, so we can focus on the more interesting, more important problems.

But only if we frame it correctly. Only if we remember that it’s our loop.

Context Is Everything

Language only works when we understand the context. A word that’s perfectly normal in Brazilian Portuguese might mean something entirely different in Spanish, even if it sounds identical.

The same is true for how we frame our work with AI.

“Human in the loop” suggests we’re optional. A formality. A speed bump in an otherwise automated process.

“AI in the loop” reminds us that we’re in control. That AI is a tool we choose to use, in specific parts of our workflow, for specific reasons.

It’s a small change. But small changes in how we talk lead to bigger changes in how we think. And how we think determines what we build.
