Every week, when Matthew and I sit down to record The Blank Page Podcast, I never know exactly where the conversation will go. I only know one thing for sure: if we follow our curiosity, we’ll end up somewhere worth exploring. Episode 7 was no exception.

The Week AI Moved Fast Again

This week brought another wave of AI releases: Google’s Gemini 3, a new AI-powered IDE called Antigravity, and a model with the ridiculous-yet-fantastic name “Nano Banana Pro.” Matthew lit up as he described the new image‑editing capabilities, especially the ability to blend multiple source images into a cohesive composite. It’s the kind of feature that would’ve required specialized tools and hours of effort not that long ago.

Meanwhile, I spent part of the week experimenting with book‑cover concepts. I moved between ChatGPT, Gemini, Snagit, and back again, nudging, refining, and iterating. The early results weren’t great. But then, suddenly, they were. The shift wasn’t dramatic; it was subtle. A little more polish here, a little better structure there. I enjoy those small steps forward. It’s the feeling of “Ah, now we’re getting somewhere.”

Tools Are Only Interesting When They Solve a Problem

As Matthew explained why he loves new tools, I was reminded again of something I’ve been repeating for years: it’s not about the tool; it’s about the problem. (That said, I’ve seen some great ways Matthew puts the shiny toys to good use!)

New IDEs are fun to try, but if the one I already use gets the job done, that’s where I stay, at least until a real need emerges or I set aside time to experiment.

That’s why I don’t chase every shiny thing. Instead, I keep a catalog of problems I want to solve. If a new tool gives me leverage, I’m ready.

Sometimes the tool doesn’t even need to be the perfect one—it just needs to work well enough.

When “Good Enough” Means “Go”

A great example came this week. I wanted a quick survey for an internal session—not a full-blown form, not a polished UI, just a place to capture answers. Instead of opening yet another form builder, I gave Gemini a markdown list of questions and said, “Create an app for these.” One minute later, I had a working, shareable mini‑app.

No friction. No overhead. Just done.

Moments like that still surprise me. They shouldn’t, not after everything we’ve seen in the last two years—but they do.

Prototypes in Minutes, Not Weeks

The part of the conversation that resonated most with me was the discussion of how AI accelerated a recent User Acceptance Testing effort. All the sessions were recorded. I knew I’d be able to revisit the transcripts later, reflect on the discussions, and dig deeper into the wording, reactions, and sentiment.

By the time the meeting ended, I had several ideas to explore. I dumped the transcripts and codebase context into Cursor and asked it to create a plan. I expected it would take half an hour to build the prototype.

Cursor did it in five minutes.

Not a perfect solution. Not even a final one. But a tangible proof‑of‑concept—something I could run, test, and refine.

Within a few hours, I had a working prototype to show the stakeholders. They validated it right away. And only then did I begin thinking about implementation details.

The speed of that loop—idea → plan → prototype → validation—still blows me away.

Diverge, Converge, Repeat

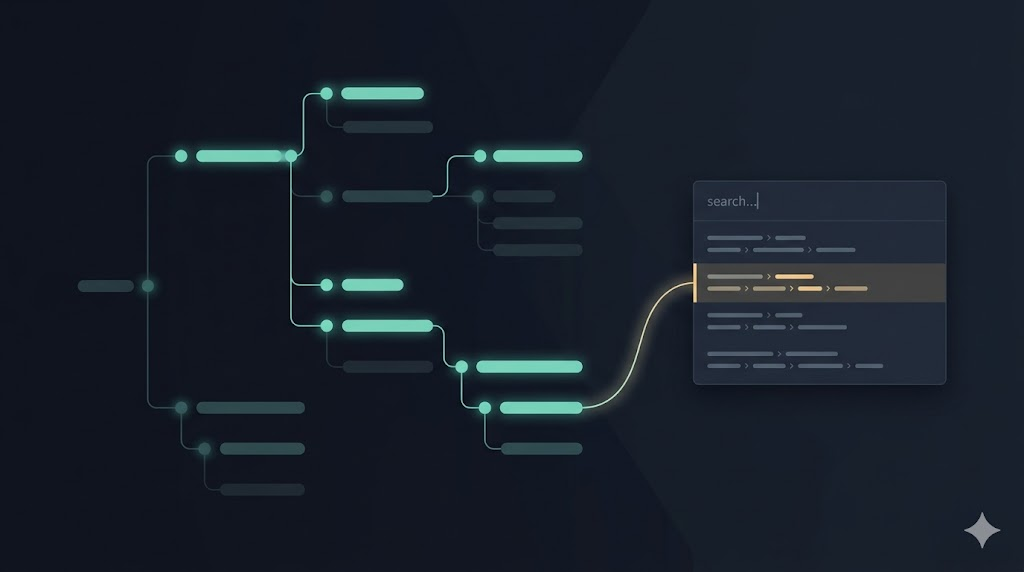

We talked about design thinking, and how AI is becoming a natural partner in the diverge–converge cycle:

- Diverge into possibilities.

- Converge into a clear direction.

- Diverge into solutions.

- Converge into the next step.

It’s the same idea I use with humans: get multiple perspectives, compare them, merge the best parts, and refine again. AI makes the loops faster.

And the comparison with humans matters. Matthew doesn’t ask a single model for an answer. He asks multiple models for their perspectives, then has them read each other’s plans, poke holes in them, and incorporate improvements. It’s the closest thing we have to creative collaboration in software.
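For the curious, here is a rough sketch of what that cross-review loop could look like in code. It is not Matthew’s actual setup. The `cross_review` function and the prompt wording are made up for illustration, and a "model" is assumed to be any function that takes a prompt string and returns text, so you would wrap whichever real clients you use.

```python
from typing import Callable, Dict

# Assumption: a "model" is any callable that maps a prompt to a text reply.
# Real API clients would need to be wrapped to fit this shape.
Model = Callable[[str], str]


def cross_review(problem: str, models: Dict[str, Model], rounds: int = 1) -> Dict[str, str]:
    """Have each model draft a plan, then revise it after reading the others' plans."""
    # Diverge: every model proposes its own plan independently.
    plans = {name: ask(f"Propose a plan for: {problem}") for name, ask in models.items()}

    for _ in range(rounds):
        revised = {}
        for name, ask in models.items():
            # Collect the plans written by the *other* models.
            others = "\n\n".join(
                f"[{other}]\n{plan}" for other, plan in plans.items() if other != name
            )
            # Converge: critique the peers, then fold the best ideas into your own plan.
            prompt = (
                f"Problem: {problem}\n\n"
                f"Your plan:\n{plans[name]}\n\n"
                f"Plans from other models:\n{others}\n\n"
                "Point out weaknesses in the other plans, then rewrite your plan "
                "incorporating their best ideas."
            )
            revised[name] = ask(prompt)
        # Swap in the revised plans only after everyone has reviewed the same round.
        plans = revised

    return plans
```

Each model revises against the previous round’s plans, so nobody gets an unfair peek at a plan that has already been improved. A human still picks the final direction from whatever comes back.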

The Strange, Useful Imperfection of Memory

Somewhere along the way, our conversation drifted—beautifully—into the nature of memory. Human memory. Machine memory. The drift that happens over time.

Models remember things across conversations, which sometimes helps and sometimes confuses. Humans do the same. We reconstruct memories from fragments, fill gaps with stories, and treat the stitched‑together narrative as fact.

But as I’ve been revisiting my own 20 years of blog posts, I’ve been reminded how important it is to:

- Capture snapshots of what we believed.

- Revisit those snapshots with new knowledge.

- Notice the drift.

- Update the beliefs.

That’s the heart of my weekly Back to the Spiral newsletter. Past → Present → Future. What I’m doing now, what I’ve done before, and where I think I’m headed—a self‑reflection loop powered by notes, transcripts, old talks, journals, and weekly pause points.

AI accelerates our thinking. But reflection anchors it.

Language, Culture, and the Drift of Meaning

From AI memory, we drifted into human language—how words evolve, how meanings shift, how culture shapes our vocabulary.

We laughed about Portuguese speakers using English loanwords for things that already have perfect Portuguese equivalents. But underneath the humor was a deeper point: nothing stays fixed. Language drifts. Beliefs drift. Cultures drift.

Even our memories drift.

Which is why capturing our thoughts matters. Because the moment will not come back. At least not in its original shape.

What Endures

As always, we closed with a discussion of storytelling. Effects fade. Tools become obsolete. Features get replaced.

But stories carry forward.

It’s why a book like Fahrenheit 451 still hits hard today. And why, when Matthew and I record these episodes, I’m reminded again and again how meaningful it is to sit down, hit record, and talk.

Because somewhere between curiosity, reflection, and conversation, we stumble into the insights we didn’t know we were looking for.

If this episode sparked a thought, question, or tangent you want us to explore, let us know. The Blank Page is always open.
