I worked on a project years ago where the product owner was stretched thin across multiple initiatives. She had the title, but not the bandwidth. So I started helping with the backlog: writing stories, refining them, making sure they reflected the problems we were trying to solve. Then I’d bring them back to her for validation.

I didn’t think much of it at the time. It was just something that had to happen.

What the 2020 Scrum Guide Changed

I didn’t take a formal Scrum class until around 2024. Before that, I’d taught Scrum and agile practices, skimmed the Scrum Guide, and mostly just worked on projects and got things done.

When I finally took that Scrum Master class, I learned that the 2020 Scrum Guide had made a significant shift. Product owners, developers, and Scrum Masters were no longer described as roles. They were described as accountabilities.

That distinction hit me immediately. These aren’t job titles assigned to specific people; they’re things that have to happen on every Scrum team, regardless of who does them.

I’d already been living this. I’d written stories when no product owner was available. I’d coached teams when there was no Scrum Master. I handled QA, UX design, and code reviews because those tasks were necessary, and I had the experience to contribute.

The Scrum Guide was finally describing what I’d experienced: these aren’t roles you play. They’re responsibilities the team must fulfill.

Stories Aren’t One Person’s Job

I wrote a blog post years ago called User Stories Are For Everybody. I even turned it into a talk. The core idea: writing and refining stories isn’t just the product owner’s job. It’s an accountability the whole team can share.

When we empower the entire Scrum team to think about stories, we multiply perspectives. More people are on the lookout for valuable work. More people understand the problems we’re trying to solve.

We can scale this further. During sprint review, when we present what we’ve built, we’re telling stories. And as we gather feedback, we can help stakeholders tell their stories: how our work solves their problem, or what needs to change for it to work.

I’ve coached customer support teams the same way. Instead of reporting “users are clicking button X and getting an error,” they can tell the story: what the user was trying to do, what they expected, and the business context. That story helps developers address the issue far more effectively.

And we can push it one step further. When customer support receives a request from a client, they can guide the conversation to elicit the client’s story. You don’t ask end users for requirements. You ask them to tell a story: What were you trying to do? What did you expect? What was the need?

Once I hear that story, I can retell it to the next person, adjusting it with context that helps them build on it.

Where AI Fits Into the Loop

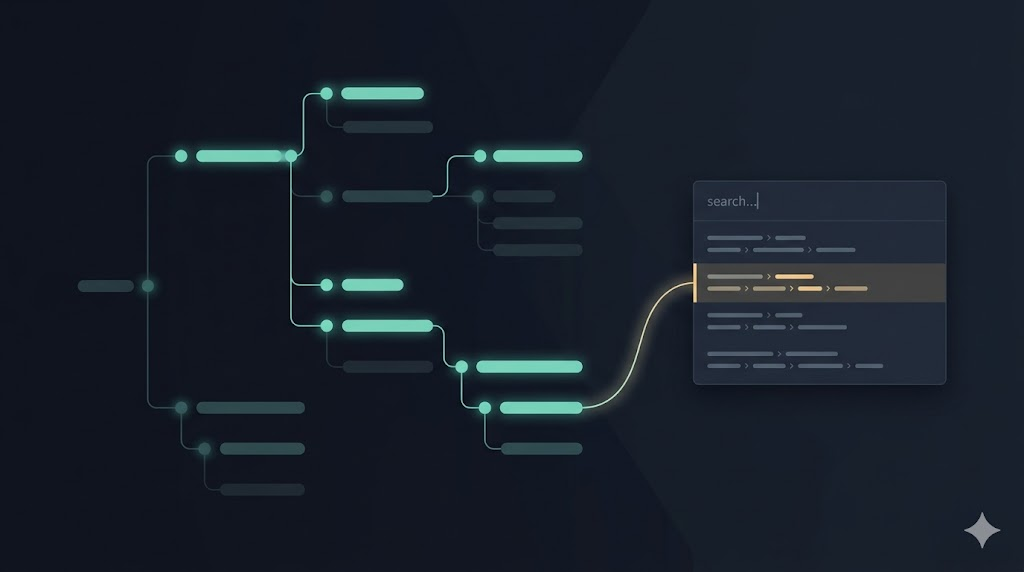

When we think about how AI assistance fits into our work, we can ask: How might AI help us in this loop? And when AI goes off into its own loop, how does it report back to us so that it returns to our loop, the most important one?

Through that lens, AI tools can genuinely help in a few areas: extracting stories from conversations, facilitating those stories, and asking the right questions to achieve the desired outcome.

Think of accountabilities as the things that have to happen: figuring out the most valuable items for the backlog, then prioritizing, describing, and refining them. Those are all places where we can get AI to help us, regardless of our job title.

Somebody fully dedicated to writing code can leverage AI to identify valuable stories, refine those stories, ask better questions, and then have the conversation with the people accountable for making those decisions. Because all of that has to happen regardless of who does it.

Codifying How We Work

There’s another mindset shift here: think not about who’s supposed to do what, but about what has to happen. What are the distinct things that need to happen? And why do they need to happen that way?

As I’ve been leveraging AI assistance, I’ve found this approach beneficial. I identify what has to happen, understand why, codify that, and give it to AI. When I facilitate conversations or articulate findings in user stories, acceptance criteria, and scenarios, I codify how I do that so my AI agents do it the way I normally would.

For instance, I don’t think of them as user stories. I think of them as human stories. I don’t put technology-specific things in the story because I’m trying to help people, not automated systems. Even if what brings value is optimizing an automated system, that’s not because I’m being nice to the system. I’m bringing more value to the people who will leverage those improvements.

That’s important to codify. It’s something that has to happen. And we can show our AI agents how we prefer doing it.
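To make "codify" a little more concrete, here is a minimal sketch of what that might look like in Python. It is only an illustration, not a description of any particular tool or of my actual agent setup: the `HumanStory` class, its field names, and the `TECH_TERMS` word list are all hypothetical, chosen to mirror the three questions above and the rule about keeping technology out of the story.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical word list: technology-specific terms we want to keep out of a story.
TECH_TERMS = {"api", "database", "endpoint", "button", "sql"}


@dataclass
class HumanStory:
    """A story captured with the three questions: goal, expectation, need."""

    trying_to_do: str  # What were you trying to do?
    expected: str      # What did you expect?
    need: str          # What was the need?

    def tech_terms_found(self) -> list[str]:
        """Return any technology-specific words that slipped into the story."""
        text = f"{self.trying_to_do} {self.expected} {self.need}".lower()
        cleaned = {word.strip(".,;:!?\"'()") for word in text.split()}
        return sorted(cleaned & TECH_TERMS)

    def as_agent_context(self) -> str:
        """Render the story as plain text that could be handed to an AI agent."""
        return (
            f"The person was trying to: {self.trying_to_do}\n"
            f"They expected: {self.expected}\n"
            f"The underlying need: {self.need}"
        )


# Example usage with a made-up story.
example = HumanStory(
    trying_to_do="send a monthly invoice to a client",
    expected="the client receives it the same day",
    need="get paid on time without chasing people",
)
print(example.as_agent_context())
print(example.tech_terms_found())  # an empty list means no flagged terms
```

The point of the sketch is the shape, not the details: the questions become fields, and a preference such as "no technology in the story" becomes a check that a person or an agent can run before the story moves on.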

What are the accountabilities in your work that you’ve been treating as someone else’s role?
