Two new developers are joining our project. I want them productive quickly, but more than that, I want them to understand why the code exists, not just what it does.

The traditional approach would be a whiteboard session: “Here’s the architecture. Here’s the domain model. Here’s how events flow through the system.”

They’d nod. Take notes. Maybe even understand the structure.

But would they understand why we built it this way? Would they know which features produce which data? Would they see the human needs behind the technical decisions?

I needed a different approach. One that wouldn’t make me the bottleneck for every question.

The Problem I’m Solving

When a new developer joins a project, they face a knowledge gap. Not just “how does this code work?” but “why does this feature exist?”

I could spend hours walking them through the codebase. Explaining event sourcing. Showing them projections. Tracing data flows.

But that doesn’t scale. And honestly, I don’t remember all the details either.

What if instead of me explaining the system, I could give them a way to explore it themselves? A way that connects user actions to code, business needs to technical implementation?

Building a Feature Analysis Workflow

I created an AI-assisted workflow that answers the question: “What does this screen do, and how does it work?”

You give it a screenshot or URL. It analyzes the feature from multiple angles:

User perspective: What problem does this solve?

Frontend: Which components render this screen?

Backend: Which API endpoints provide the data?

Data flow: Where does this information come from?

Events: Which domain events populate this data?

Features: Which user actions trigger those events?

Tests: Where are the tests that verify this behavior?

The AI traces the entire path from user action to the database, then generates comprehensive documentation.
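As a rough sketch (not the workflow's actual output format, which isn't shown here), the angles above could be captured in a structure like the one below. Every type, field, and function name is hypothetical; only the endpoint string comes from the inventory example later in this post.

```typescript
// Hypothetical shape of one generated analysis. All names are illustrative.
interface FeatureAnalysis {
  userPerspective: string;      // what problem the screen solves
  frontendComponents: string[]; // components that render the screen
  apiEndpoints: string[];       // endpoints that provide the data
  dataFlow: string[];           // ordered hops from screen to storage
  domainEvents: string[];       // events that populate the data
  triggeringFeatures: string[]; // user actions that raise those events
  testLocations: string[];      // where the behavior is verified
}

const angles: (keyof FeatureAnalysis)[] = [
  "userPerspective",
  "frontendComponents",
  "apiEndpoints",
  "dataFlow",
  "domainEvents",
  "triggeringFeatures",
  "testLocations",
];

// Return the angles the analysis left empty, so a reader knows where to dig.
function missingAngles(analysis: FeatureAnalysis): string[] {
  return angles.filter((angle) => analysis[angle].length === 0);
}

// A partially filled draft with made-up values for illustration.
const draft: FeatureAnalysis = {
  userPerspective: "See what stock is on hand and what is due in",
  frontendComponents: [],
  apiEndpoints: ["GET /api/v1/warehouse/inventory"],
  dataFlow: ["screen", "API", "query handler", "read model"],
  domainEvents: ["(event names go here)"],
  triggeringFeatures: ["Place purchase order"],
  testLocations: [],
};

console.log(missingAngles(draft));
```

A gap list like this gives a new developer a natural first question to bring to the follow-up conversation.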

How It Works

I ran the workflow on our inventory screen. Here’s what happened:

I logged into the system as a test user. Placed a purchase order for apples and corn. Checked inventory levels. The system showed zero on hand today, with quantities expected tomorrow.

Then I simulated the truck arriving early. Marked the purchase order as received. The inventory numbers were updated immediately.

I gave the AI a screenshot and ran the new workflow with the prompt “analyze this feature”.

The AI:

- Found the Angular route and components
- Traced the API endpoint (GET /api/v1/warehouse/inventory)
- Located the backend query handler
- Analyzed the read model projection
- Identified 11 different domain events that affect inventory
- Mapped those events to user-facing features
- Found all related tests (frontend and backend)
- Extracted Given-When-Then scenarios from test code
- Generated a complete technical analysis document
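To make the Given-When-Then idea concrete, here is a minimal sketch of how one extracted scenario might be represented and rendered. The `Scenario` type and `renderScenario` function are assumptions made for illustration, not the workflow's real output. The scenario text restates the early-delivery walkthrough above.

```typescript
// Hypothetical representation of one scenario pulled out of test code.
interface Scenario {
  given: string[];
  when: string;
  then: string[];
}

// Render a scenario as plain Given/And/When/Then lines.
function renderScenario(scenario: Scenario): string {
  const lines: string[] = [];
  scenario.given.forEach((step, i) => {
    lines.push((i === 0 ? "Given " : "And ") + step);
  });
  lines.push("When " + scenario.when);
  scenario.then.forEach((step, i) => {
    lines.push((i === 0 ? "Then " : "And ") + step);
  });
  return lines.join("\n");
}

// The early-delivery walkthrough, restated as a scenario.
const earlyDelivery: Scenario = {
  given: [
    "a purchase order for apples and corn is due tomorrow",
    "nothing is on hand today",
  ],
  when: "the purchase order is marked as received",
  then: ["the on-hand quantities update immediately"],
};

console.log(renderScenario(earlyDelivery));
```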

What the AI Discovered

The analysis revealed that the inventory feature isn’t about displaying numbers. It’s about coordinating information across the business.

When a shipment arrives early, that affects:

- What the warehouse team can pick and pack
- What the sales team can promise to customers
- What the finance team expects in cash flow
- What the purchasing team plans for future orders

The AI traced all of this. It found that inventory data comes from:

- Purchase orders (creates lots, increases “due in”)
- Receiving shipments (increases “on hand”)
- Sales orders (increases “unallocated”)
- Pick tickets (increases “allocated”)
- Customer invoices (decreases “on hand”)
- Manual adjustments
- Customer returns
- Order cancellations

Each of these is a separate feature. Each produces domain events. Each affects the inventory read model in specific ways.
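The idea of events shaping a read model can be sketched in a few lines. This is a simplified illustration, not our actual projection. The event names and the `InventoryLevel` fields are hypothetical, and it models only the five effects spelled out in the list above. A real projection would likely adjust other quantities at the same time (for example, when a shipment is received), which is left out here because those details aren't described in this post.

```typescript
// Hypothetical event names; the real system has 11 events that affect inventory.
type InventoryEventType =
  | "PurchaseOrderPlaced"
  | "ShipmentReceived"
  | "SalesOrderPlaced"
  | "PickTicketCreated"
  | "CustomerInvoiced";

interface InventoryEvent {
  type: InventoryEventType;
  quantity: number;
}

// Hypothetical read model row for a single product.
interface InventoryLevel {
  dueIn: number;
  onHand: number;
  unallocated: number;
  allocated: number;
}

// Apply one event to a copy of the current level (the input is not mutated).
function applyEvent(level: InventoryLevel, event: InventoryEvent): InventoryLevel {
  const next: InventoryLevel = {
    dueIn: level.dueIn,
    onHand: level.onHand,
    unallocated: level.unallocated,
    allocated: level.allocated,
  };
  switch (event.type) {
    case "PurchaseOrderPlaced":
      next.dueIn += event.quantity;
      break;
    case "ShipmentReceived":
      next.onHand += event.quantity;
      break;
    case "SalesOrderPlaced":
      next.unallocated += event.quantity;
      break;
    case "PickTicketCreated":
      next.allocated += event.quantity;
      break;
    case "CustomerInvoiced":
      next.onHand -= event.quantity;
      break;
  }
  return next;
}

// Fold an event history into the current inventory level.
function project(events: InventoryEvent[]): InventoryLevel {
  const empty: InventoryLevel = { dueIn: 0, onHand: 0, unallocated: 0, allocated: 0 };
  return events.reduce(applyEvent, empty);
}

const history: InventoryEvent[] = [
  { type: "PurchaseOrderPlaced", quantity: 100 },
  { type: "ShipmentReceived", quantity: 100 },
  { type: "CustomerInvoiced", quantity: 30 },
];

console.log(project(history).onHand);
```

Because the read model is just a fold over events, replaying the same history always produces the same numbers, which is what lets the analysis map each quantity on the screen back to the features that produce it.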

The Unexpected Discovery

The AI found dead code.

It identified “pending transactions” logic in both the frontend and the backend. Code that no longer represents how the system works.

I remembered the story. We used to handle certain transactions differently. We changed the approach a while ago. But we never removed the old code.

A new developer running this analysis would surface that finding. They’d ask: “What are pending transactions?”

I’d explain the history. Then we’d create a plan to safely remove the obsolete code.

The AI can’t make that decision. But it can raise the question. And that’s valuable.

The Value Proposition

This workflow saves time. Not just for new developers, but for me.

Instead of spending two hours walking someone through the inventory feature, I can say: “Run the analyze-feature workflow on the inventory screen. Read the generated documentation. Then let’s talk about what you found.”

They get:

-

A complete technical analysis

-

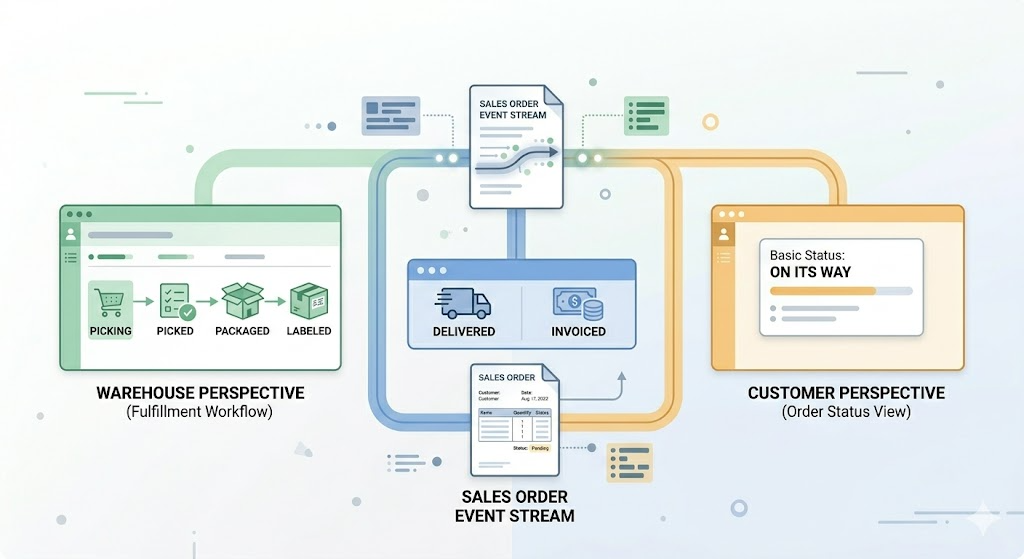

Data flow diagrams

-

Event-to-feature mappings

-

Test locations with Given-When-Then scenarios

-

A starting point for deeper questions

I get:

- Time back
- Better questions from developers who’ve done their homework
- Documentation that stays current (regenerate anytime)
- Insights I might have missed

What I’m Learning

Building this workflow is a great example of AI assistance amplifying my ability to share my expertise.

I still need to explain why we chose event sourcing. Why we separated read and write models. Why certain business rules exist.

But the AI handles the mechanical work: tracing code paths, finding files, extracting test scenarios, mapping dependencies.

It’s like having a junior developer who’s really good at grep, but needs guidance on what matters.

The Bigger Pattern

This isn’t just about onboarding. It’s about making implicit knowledge explicit.

I’ve been on this project for some time. I know how it works and its history. But some of that knowledge lives in my head.

When I leave, or when the team grows, that knowledge needs to be transferred.

Traditional documentation goes stale. Comments get outdated. Wikis become graveyards of obsolete information.

But a workflow that generates documentation from the current codebase? That stays relevant. Run it again, get an updated analysis.

The Human Element

Here’s what the AI can’t do:

It can’t tell you why we built it this way instead of another way.

It can’t explain the business context that drove the design.

It can’t share the lessons learned from previous attempts.

It can’t make judgment calls about what to change.

That’s still my job. That’s still human work.

But the AI can prepare the ground. It can gather the facts. It can surface the questions worth asking.

Then we have better conversations.

Where This Goes

I’m experimenting with this approach for onboarding. I don’t know yet if it will work as well as I hope.

But I enjoy using these tools to articulate the why behind the code, not just the what, and to surface or answer the questions that matter most. It’s another example of using AI as a thought partner, not just a code generator.

And I’m building a system where knowledge doesn’t live in one person’s head.

That’s worth the experiment.
