Near the end of a session on end-to-end testing, one of the developers said something that made me pause:

“So we avoid the refactoring? Like, we avoid having to refactor after a long time?”

My answer was something I’ve said before, but it landed differently in that moment:

“It’s not that we want to avoid refactoring. It’s that we’re going to decrease the surface of things that need refactoring.”

That’s the distinction. And the reason that distinction matters now more than ever is AI.

Stories Are Not Requirements

I learned this lesson years ago: user stories are not requirements.

Requirements are what someone hands you and tells you to build. Stories are what someone tells you to help you understand. The difference is not semantic. It shapes how the work gets done.

Nobody says, “Let me tell you a requirement.” People tell stories. And when a team sits together and tells the story of a warehouse manager adjusting inventory, they start asking the right questions. What happens when you try to decrease by more than what’s on hand? Is that common? Is it a hassle in the current system? Does the business have a specific process for it?

Those questions don’t come from a requirements document. They come from a shared conversation around a story. And those conversations, before any code is written, create the clarity that everything downstream depends on.

What AI Actually Multiplies

AI tools are multipliers. That’s the accurate word for it. They take what you give them and turn it into results faster.

Which means they also multiply whatever problems are in your input.

A vague story with undefined edge cases produces vague implementation guidance. The AI will fill the gaps because it has to. You’ll get code that compiles and maybe even runs, but doesn’t reflect what the users actually need. And then you refactor.

A well-crafted story, written in Given/When/Then with clear scenarios and documented edge cases, is an entirely different input. When AI is given that as context, it knows how to design the endpoints. It knows how to implement the UI and backend in a way that aligns with the business intent. It knows which tests to write and how to name them.

The story trains the AI. The AI designs and implements. The refactoring surface shrinks.
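To make that concrete, here is a minimal sketch of what a story with a documented edge case might look like once it is written down, using the warehouse manager example from earlier. Everything in it is an assumption for illustration: the `Scenario` shape, the `decreaseInventory` function, and above all the business rule itself (that a decrease larger than the stock on hand is rejected). In a real team, that rule is exactly what the conversation around the story would settle.

```typescript
// Hypothetical scenario shape; field names are illustrative, not from any tool.
type Scenario = {
  title: string;
  given: string;
  when: string;
  then: string;
};

// Two scenarios from the same story: the happy path and the edge case.
const scenarios: Scenario[] = [
  {
    title: "Decrease within stock on hand",
    given: "a product with 10 units on hand",
    when: "the warehouse manager decreases inventory by 4",
    then: "the product has 6 units on hand",
  },
  {
    title: "Decrease by more than stock on hand",
    given: "a product with 10 units on hand",
    when: "the warehouse manager decreases inventory by 15",
    then: "the adjustment is rejected and 10 units remain on hand",
  },
];

type AdjustmentResult =
  | { ok: true; onHand: number }
  | { ok: false; onHand: number; reason: string };

// ASSUMED rule: a decrease larger than the stock on hand is rejected
// and the quantity stays unchanged. The business may decide otherwise.
function decreaseInventory(onHand: number, amount: number): AdjustmentResult {
  if (amount > onHand) {
    return {
      ok: false,
      onHand,
      reason: "Cannot decrease by more than stock on hand",
    };
  }
  return { ok: true, onHand: onHand - amount };
}
```

With the edge case spelled out in the second scenario, there is no gap left for the AI (or a developer) to fill with a guess: the rejection branch in `decreaseInventory` follows directly from the story's wording.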

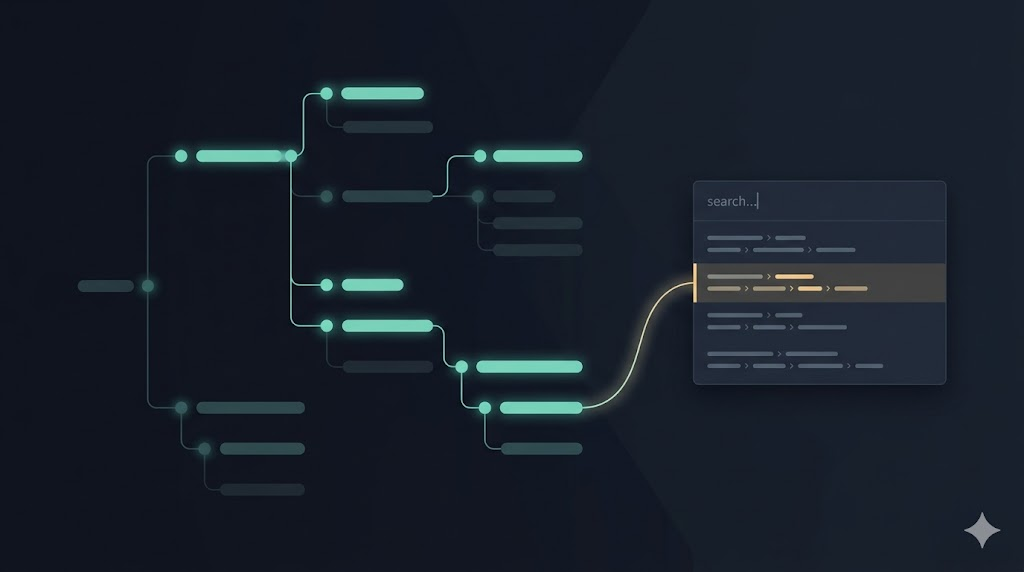

The Cycle Is Already Closing

During that session, I also showed a workflow that takes a finished feature, runs its end-to-end tests with screen recording, and automatically produces stakeholder-friendly documentation: screenshots, descriptions, and context pulled from the test scenarios. I described that workflow in “From Cypress to Playwright, BDD and the Loop That Closes.”

That workflow starts from the story.

The story shaped the Given/When/Then scenarios. The scenarios shaped the end-to-end tests. The tests ran and produced recordings. The workflow extracted the frames and wrote the document.
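One way to picture that chain is a scenario whose wording is reused at each step. The sketch below is not the actual workflow; the `Scenario` type and the two helper functions are hypothetical names, shown only to illustrate how the same Given/When/Then text could become both an end-to-end test title and the captions that sit next to extracted frames in a document.

```typescript
// Hypothetical scenario shape, assumed for this sketch.
type Scenario = {
  title: string;
  given: string;
  when: string;
  then: string;
};

// Build an end-to-end test title from the scenario's own wording,
// so the test report reads like the story.
function toTestTitle(s: Scenario): string {
  return `${s.title}: Given ${s.given}, when ${s.when}, then ${s.then}`;
}

// Build the caption lines a documentation step could place
// beside screenshots taken from the test recording.
function toDocCaptions(s: Scenario): string[] {
  return [`Given ${s.given}`, `When ${s.when}`, `Then ${s.then}`];
}

// Example scenario; the rejection outcome is an assumed business rule.
const overDecrease: Scenario = {
  title: "Decrease by more than stock on hand",
  given: "a product with 10 units on hand",
  when: "the warehouse manager decreases inventory by 15",
  then: "the adjustment is rejected",
};

const testTitle = toTestTitle(overDecrease);
const captions = toDocCaptions(overDecrease);
```

The title string could be handed to whichever test runner is in use, and the captions to whatever writes the document. The point is that neither is authored separately: both are derived from the story.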

So the story doesn’t just help before the code. It echoes through the entire lifecycle, from conversation to deployment to UAT documentation.

One of the developers noticed it: “So we’re closing the loop.”

Yes. And AI is what’s making that loop tighter than it’s ever been.

Decreasing the Surface

When I talk about reducing the refactoring surface, I’m not talking about eliminating change. Change is healthy. Stakeholders see a feature in production and discover an edge case they didn’t anticipate. That’s normal. We cut a new story and go through the whole cycle again. We embrace change.

What I’m talking about is the unnecessary refactoring: the kind that happens because nobody truly understood the feature before building it. Because the story was vague enough that everyone believed they shared the same understanding, until the output revealed they’d all assumed different things.

That’s the surface we’re shrinking.

And as the loop tightens, as the feedback cycle between story, implementation, and human validation gets shorter, we spot those gaps earlier, before they become something to untangle in the middle of a sprint.

Where the Test Distribution Points

In a recent post on outside-in development, I described how the distribution of tests across layers tells a story of its own. We started outside-in and front-heavy because we were building the UI first to show stakeholders and get early feedback. Then, as the features stabilized, the backend tests grew to nearly match the frontend. And over the last several sprints, the end-to-end line has been quietly going up.

AI is making those tests easier to write. And the better the stories driving them, the more accurate the tests become.

That’s the multiplier at work. Not in the AI itself, but in what you give it to work with.
